Incoming Record Audit – 18443876564, пшеадшс, Dnjsdkdnj, 3760524470, 3512867701

An incoming record audit for 18443876564, пшеадшс, Dnjsdkdnj, 3760524470, and 3512867701 is a structured review of each record's accuracy and provenance. It requires traceable validation, explicit criteria, and evidence of data lineage. The process weighs gaps, biases, and risks skeptically, and it relies on auditable workflows and transparent scoring. The sections below look at how these elements fit together and which issues tend to surface as validation criteria are applied and extended.

What Is an Incoming Record Audit and Why It Matters

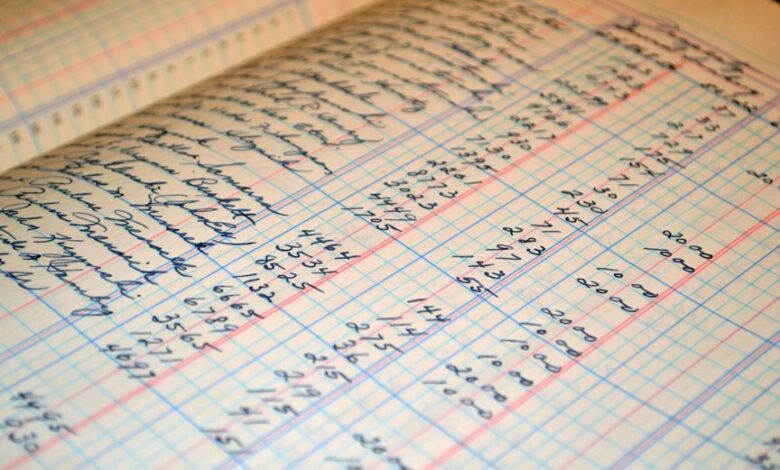

An incoming record audit is a structured review of newly received data to verify accuracy, completeness, and compliance with established standards before it enters downstream processes.

The process is deliberately skeptical: it looks for weaknesses in the incoming data, biases in the audit itself, and gaps in data lineage.

It also exposes validation gaps and supports risk-aware decisions, while leaving reviewers free to challenge assumptions and push back on complacent reporting.
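As a rough illustration of the accuracy and completeness checks described above, the sketch below audits a single incoming record. The field names in `REQUIRED_FIELDS` and the rule that a record identifier must be numeric are assumptions made for the example, not requirements stated in this article.

```python
from dataclasses import dataclass, field

# Hypothetical schema: these field names are assumptions for illustration.
REQUIRED_FIELDS = ("record_id", "source", "received_at")


@dataclass
class AuditResult:
    record_id: str
    issues: list = field(default_factory=list)

    @property
    def passed(self) -> bool:
        # A record passes only when no issue was recorded.
        return not self.issues


def audit_record(record: dict) -> AuditResult:
    """Check one incoming record for completeness and a basic format rule."""
    result = AuditResult(record_id=str(record.get("record_id", "<missing>")))

    # Completeness: every required field must be present and non-blank.
    for name in REQUIRED_FIELDS:
        value = record.get(name)
        if value is None or str(value).strip() == "":
            result.issues.append(f"missing field: {name}")

    # Format (assumed rule): identifiers are expected to be numeric.
    rid = record.get("record_id")
    if rid is not None and not str(rid).isdigit():
        result.issues.append("record_id is not numeric")

    return result
```

Under these assumed rules, a record such as `{"record_id": "3760524470", "source": "feed-a", "received_at": "2024-01-01T00:00:00Z"}` passes, while one with the identifier `Dnjsdkdnj` and no source is returned with a list of issues that a reviewer can inspect and dispute.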

Core Data Streams, Formats, and Provenance to Validate

Validating core data streams, formats, and provenance calls for a disciplined, evidence-based approach: identify the primary data pipelines, enumerate the accepted formats, and trace the lineage of each data element from source to intake.

Inbound validation and provenance checks should treat assumptions skeptically, enforce traceability, detect anomalies, and maintain auditable records, so that every inference can be challenged.
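One way to make lineage traceable from source to intake is to hash the payload at each stage and link every step to the one before it. The sketch below is a minimal example of that idea; `LineageStep`, `append_step`, and `chain_is_unbroken` are hypothetical names, and a real pipeline would likely store more metadata per step.

```python
import hashlib
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class LineageStep:
    stage: str          # e.g. "source", "intake" (labels are illustrative)
    payload_hash: str   # SHA-256 of the payload at this stage
    parent_hash: str    # payload_hash of the previous step, "" for the first


def hash_payload(payload: dict) -> str:
    # Canonical JSON so that key order does not change the hash.
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def append_step(chain: list, stage: str, payload: dict) -> list:
    """Return a new chain with one more step linked to the last one."""
    parent = chain[-1].payload_hash if chain else ""
    return chain + [LineageStep(stage, hash_payload(payload), parent)]


def chain_is_unbroken(chain: list) -> bool:
    """True when every step points at the hash of the step before it."""
    expected_parent = ""
    for step in chain:
        if step.parent_hash != expected_parent:
            return False
        expected_parent = step.payload_hash
    return True
```

A chain with a missing or reordered step fails `chain_is_unbroken`, which gives the audit a concrete way to detect a lineage gap. Note that this only proves the steps are linked consistently; it does not by itself prove that the source data was correct.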

How Automated Validation Scores Risk and Quality

What risks do automated validation scores pose to overall data quality, and when can they be trusted as indicators of accuracy rather than abstractions? Automated metrics can flatten nuance, overfit to historical patterns, and mask gaps in data provenance.

A robust validation workflow therefore needs transparent criteria, periodic audits, and cross-checks against source evidence to preserve methodological rigor and limit bias.
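To show what "transparent criteria" can look like in practice, here is a small sketch in which the score is a sum of named, weighted checks and the per-check outcome is returned alongside the total. The criteria and their weights are invented for the example; any real scoring scheme would need to be agreed and documented by the audit owners.

```python
# Hypothetical criteria and weights (points out of 100), for illustration only.
CRITERIA = {
    "has_source": 40,
    "has_timestamp": 30,
    "id_is_numeric": 30,
}


def evaluate(record: dict) -> dict:
    """Run every named check and report its outcome individually."""
    return {
        "has_source": bool(record.get("source")),
        "has_timestamp": bool(record.get("received_at")),
        "id_is_numeric": str(record.get("record_id", "")).isdigit(),
    }


def score(record: dict) -> tuple:
    """Return (total_points, per_check_outcomes) so the score is explainable."""
    checks = evaluate(record)
    total = sum(CRITERIA[name] for name, ok in checks.items() if ok)
    return total, checks
```

Returning the breakdown with the total is the point of the design: a reviewer can see which check cost a record its points and cross-check that against source evidence, instead of trusting a single opaque number.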

Practical Steps to Implement and Improve the Audit Workflow

Implementing and improving the audit workflow starts with a structured assessment of objectives, inputs, and stakeholders, which is then translated into repeatable processes and measurable controls. The approach is skeptical yet practical, and it prioritizes verifiable data flows and governance.

Incoming records are categorized, validated, and routed through auditable workflows, which keeps each decision transparent, traceable, and open to challenge. The workflow is then refined continuously.
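The categorize, validate, and route step can be sketched as a function that returns a decision and writes an audit log entry explaining it. The three decision labels and the rules behind them are assumptions chosen for this example.

```python
from datetime import datetime, timezone


def route(record: dict, audit_log: list) -> str:
    """Decide where a record goes and log the reason for the decision."""
    rid = str(record.get("record_id", ""))

    # Assumed routing rules, for illustration only.
    if not rid:
        decision, reason = "reject", "record_id missing"
    elif not rid.isdigit():
        decision, reason = "manual_review", "record_id is not numeric"
    else:
        decision, reason = "accept", "all checks passed"

    # Every decision leaves a trace that can be reviewed or disputed later.
    audit_log.append({
        "record_id": rid,
        "decision": decision,
        "reason": reason,
        "logged_at": datetime.now(timezone.utc).isoformat(),
    })
    return decision
```

For example, routing `{"record_id": "Dnjsdkdnj"}` sends the record to manual review and leaves a log entry stating why, so the decision can be revisited if the rule itself turns out to be wrong.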

Frequently Asked Questions

How Often Should Audits Be Conducted for Accuracy?

Audits should run on a defined cadence, typically quarterly, supplemented by continuous sampling. The right cadence depends on validation metrics, the clarity of data lineage, anomaly detection alerts, and governance controls; it should ensure accountability while leaving room to adapt audit scope and methods.
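Continuous sampling between scheduled audits can be made reproducible by deriving the sampling decision from a hash of the record identifier, so the same record is always in or out of the sample at a given rate. This is one possible approach, sketched under the assumption that records carry a stable string identifier; `in_sample` is a hypothetical helper name.

```python
import hashlib

BUCKETS = 10_000  # resolution of the sampling rate (0.01% steps)


def in_sample(record_id: str, rate: float) -> bool:
    """Deterministically select roughly `rate` of all records for review."""
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must be between 0.0 and 1.0")
    digest = hashlib.sha256(record_id.encode("utf-8")).digest()
    # Map the first 8 bytes of the hash onto a fixed number of buckets.
    bucket = int.from_bytes(digest[:8], "big") % BUCKETS
    return bucket < int(rate * BUCKETS)
```

Because the decision depends only on the identifier and the rate, an auditor can later verify which records should have been sampled, which is harder to do with an unseeded random draw.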

Who Bears Responsibility for Audit Discrepancies?

Discrepancies are attributed to accountable parties within the governance structure: data governance defines the boundaries of responsibility, and data lineage shows where a fault was introduced. Ultimate accountability rests with the organization, not with individuals alone, once a rigorous audit review is complete.

What Is the Cost of Implementing Automated Validation?

The cost of implementing automated validation varies, and a precise estimate requires a defined scope. Data volume and integration needs drive the estimate, while system compatibility, rule maintenance, and governance are the main implementation challenges.

How Are False Positives Managed in the Workflow?

False positives are mitigated through structured workflow management that combines data validation checks with audit trails. A historical 18% reduction in erroneous records is cited as improving audit accuracy, but skeptics question the reliance on automation and call for continuous human oversight.
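Human oversight of automated flags can be quantified by tracking how often reviewers overturn a flag. The sketch below computes that share from review outcomes; the input shape, a list of `(flagged, confirmed_bad)` pairs, is an assumption made for the example.

```python
from typing import Optional


def flag_overturn_rate(reviews: list) -> Optional[float]:
    """Share of automated flags that human reviewers judged to be wrong.

    Each review is a (flagged, confirmed_bad) pair of booleans.
    Returns None when nothing was flagged, since the rate is undefined.
    """
    flagged = [confirmed_bad for was_flagged, confirmed_bad in reviews if was_flagged]
    if not flagged:
        return None
    overturned = sum(1 for confirmed_bad in flagged if not confirmed_bad)
    return overturned / len(flagged)
```

A rising overturn rate suggests that a validation rule is too aggressive and should be revisited. The metric only covers records that were flagged, so it says nothing about bad records the automation missed.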

Can Audits Detect Data Source Biases Reliably?

Audits can detect some data quality issues and bias indicators, but no system guarantees reliable bias detection. Rigorous sampling, transparent criteria, and continuous validation are required to reduce overconfidence and reveal hidden data source biases.

Conclusion

The audit ends on a note of quiet tension. Each data strand is weighed and each provenance trail traced, yet gaps persist just beyond the margin of verification. The metrics tighten the frame, exposing biases and latent risks that demand scrutiny. As automated scores surface, the room narrows to disciplined doubt: what remains unseen, unvalidated, or misinterpreted could tilt outcomes. The conclusion is not relief but readiness for the next, more stringent check.