Model & Code Validation – ko44.e3op, tif885fan2.5, chogis930.5z, 382v3zethuke

Model and code validation for ko44.e3op, tif885fan2.5, chogis930.5z, and 382v3zethuke is a disciplined practice. The emphasis is on scope, data quality, and reproducible pipelines. Governance and versioned configurations support independent checks and traceability, and automated regression tests and drift monitoring should be integral. The goal is robust, transparent verification across scenarios, with clear reporting so that degradation does not go unnoticed. The sections below outline a pragmatic, rigorous approach; the details depend on the standards a team aligns on.

What Is Model & Code Validation and Why It Matters

Model and code validation ensures that mathematical models and their implemented software behave as intended, accurately representing real systems and producing reliable results under specified conditions. The process delineates criteria, protocols, and acceptance metrics, fostering accountability and transparency.

In practice, model validation confirms fidelity to the phenomena being modelled, while code validation confirms that the software implements the model correctly. Together they safeguard integrity, reliability, and trust in computational decision-support systems.

Setting the Validation Scope for ko44.e3op and Friends

Setting the validation scope for ko44.e3op and friends requires defining boundaries, objectives, and acceptance criteria that match the system’s intended use and risk profile. The scope should cover model validation, code validation, and data validation. It should keep testing workflows reproducible while leaving room to adapt strategies, provided outcomes are still measured against rigorous, concise assessment criteria.
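
As a rough illustration, boundaries, objectives, and acceptance criteria can be written down as a small, immutable configuration object that is versioned alongside the code. The sketch below is one possible shape, not a prescribed format: the class names `AcceptanceCriterion` and `ValidationScope`, the metric names, and the thresholds are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AcceptanceCriterion:
    """One measurable pass/fail rule (names and thresholds are hypothetical)."""
    metric: str
    threshold: float
    higher_is_better: bool = False

    def passes(self, observed: float) -> bool:
        # Compare in the direction that counts as "good" for this metric.
        if self.higher_is_better:
            return observed >= self.threshold
        return observed <= self.threshold


@dataclass(frozen=True)
class ValidationScope:
    """What is being validated, for which use, and against which criteria."""
    component: str
    intended_use: str
    criteria: tuple[AcceptanceCriterion, ...]

    def evaluate(self, observed: dict[str, float]) -> dict[str, bool]:
        # A metric that was not measured is treated as a failure.
        return {
            c.metric: c.metric in observed and c.passes(observed[c.metric])
            for c in self.criteria
        }


# Illustrative scope; the values are placeholders, not recommendations.
scope = ValidationScope(
    component="ko44.e3op",
    intended_use="illustrative placeholder",
    criteria=(
        AcceptanceCriterion("rmse", 0.5),
        AcceptanceCriterion("coverage", 0.9, higher_is_better=True),
    ),
)
```

Calling `scope.evaluate({"rmse": 0.42, "coverage": 0.88})` reports each criterion separately, so a report can show exactly which acceptance criterion failed. Freezing the dataclasses keeps a scope from being changed silently after it has been reviewed.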

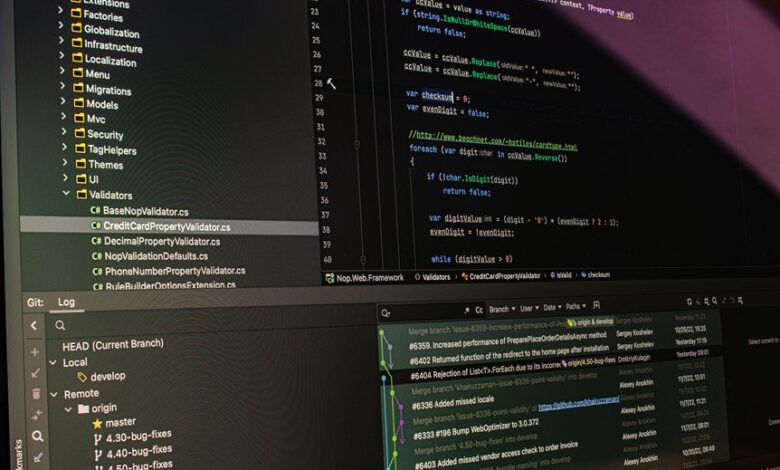

Practical Validation Workflows: Data, Tests, and Reproducibility

Practical validation workflows hinge on disciplined data management, rigorous testing protocols, and proven reproducibility practices that collectively ensure dependable results across iterations.

The approach emphasizes data drift monitoring, benchmarked test suites, and automated regression checks.

Reproducibility checks establish traceable pipelines, versioned configurations, and transparent reporting, enabling independent verification while supporting flexible experimentation within defined, auditable boundaries.
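
Two of these ideas, versioned configurations and automated regression checks, can be sketched with the standard library alone. The example assumes configurations are JSON-serializable dictionaries and metrics are stored as name-to-value mappings. The function names `config_fingerprint` and `regression_failures` and the default tolerances are hypothetical choices for illustration.

```python
import hashlib
import json
import math


def config_fingerprint(config: dict) -> str:
    """Return a stable SHA-256 hash of a JSON-serializable configuration."""
    # Sorting keys makes the hash independent of dictionary insertion order.
    canonical = json.dumps(config, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def regression_failures(
    baseline: dict,
    current: dict,
    rel_tol: float = 1e-6,
    abs_tol: float = 0.0,
) -> list:
    """List the baseline metrics that are missing or differ beyond tolerance."""
    failures = []
    for name, expected in baseline.items():
        actual = current.get(name)
        if actual is None or not math.isclose(
            actual, expected, rel_tol=rel_tol, abs_tol=abs_tol
        ):
            failures.append(name)
    return failures
```

Storing the fingerprint next to each set of results ties a reported metric to the exact configuration that produced it. A CI job could then fail whenever `regression_failures(baseline, current)` returns a non-empty list.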

Common Pitfalls and How to Fix Them in Models and Code

Common pitfalls in model and code work arise when assumptions about data, tooling, and experimentation are not consistently validated. Recurring risks include faulty baselines, opaque dependencies, and inconsistent versioning. The fixes are to document acceptance criteria, add reproducibility tests, and automate checks. Clear governance prevents drift, and disciplined experimentation confirms validity, so that results are robust, transparent, and reusable without ambiguity.

Frequently Asked Questions

How Often Should Validation Be Automated in CI Pipelines?

Automated validation should run continuously in CI pipelines, that is, on every change rather than on an occasional schedule. Maintaining quality also requires automated benchmarking, drift monitoring, and cross-team collaboration, so that feedback is timely, releases are stable, and trust is sustained across development, testing, and deployment.

What License Implications Affect Reuse of Validated Components?

Licensing affects how validated components can be reused. Ownership, attribution requirements, and license compatibility determine whether a component may be distributed, modified, or combined with code under other licenses. Companies must audit provenance, confirm license compatibility, and respect copyleft or permissive terms to avoid compliance risk.

How to Quantify Uncertainty in Model Predictions?

Uncertainty in model predictions is quantified with probabilistic metrics and validation experiments that anchor forecasts to observed data. Calibration aligns predicted probabilities with observed frequencies, which reduces bias and improves trust in the prediction intervals that decisions depend on.
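
Two simple checks of this kind are sketched below: the empirical coverage of prediction intervals, and a binned calibration error for predicted positive-class probabilities. Both are minimal illustrations under stated assumptions (equal-width bins, binary labels coded as 0 or 1). The function names and the default bin count are hypothetical.

```python
def interval_coverage(lower, upper, observed):
    """Fraction of observations that fall inside their prediction interval."""
    if not observed:
        raise ValueError("observed must not be empty")
    hits = sum(lo <= y <= hi for lo, hi, y in zip(lower, upper, observed))
    return hits / len(observed)


def binned_calibration_error(probs, labels, n_bins=10):
    """Weighted mean gap between predicted probability and observed frequency.

    probs are predicted probabilities of the positive class in [0, 1];
    labels are the matching outcomes coded as 0 or 1.
    """
    if not probs:
        raise ValueError("probs must not be empty")
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probs, labels):
        # Clamp so that p == 1.0 lands in the last bin.
        idx = min(int(p * n_bins), n_bins - 1)
        bins[idx].append((p, y))
    total = len(probs)
    error = 0.0
    for members in bins:
        if not members:
            continue
        mean_prob = sum(p for p, _ in members) / len(members)
        frequency = sum(y for _, y in members) / len(members)
        error += (len(members) / total) * abs(mean_prob - frequency)
    return error
```

If nominal 90% intervals cover far fewer than 90% of held-out observations, the intervals are too narrow. A calibration error near zero indicates that predicted probabilities track observed frequencies within each bin.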

Which Stakeholders Must Approve Validation Results?

Approval rests with designated authorities: the accountable stakeholders, the validation governance body, and independent reviewers. The process requires formal sign-offs, documented criteria, and traceable decisions, which together provide transparency, accountability, and alignment with overarching risk and quality objectives.

How to Handle Real-World Data Drift Over Time?

Data drift requires proactive model monitoring; establish rolling performance checks, alerts, and retraining criteria. Track feature distributions, drift magnitude, and impact on outcomes; implement governance, documentation, and rollback plans to preserve reliability and stakeholder trust.
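
One common way to put a number on drift magnitude for a single numeric feature is the population stability index (PSI), which compares binned proportions between a reference sample and a current sample. The sketch below assumes equal-width bins derived from the reference sample. The function name, bin count, and the small `eps` floor for empty bins are illustrative choices, not a standard API.

```python
import math


def population_stability_index(reference, current, n_bins=10, eps=1e-6):
    """PSI between two samples of one numeric feature (equal-width bins)."""
    if not reference or not current:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(reference), max(reference)
    # Fall back to a unit width if every reference value is identical.
    width = (hi - lo) / n_bins or 1.0

    def proportions(values):
        counts = [0] * n_bins
        for v in values:
            idx = int((v - lo) / width)
            # Values outside the reference range go to the edge bins.
            idx = max(0, min(idx, n_bins - 1))
            counts[idx] += 1
        # Floor at eps so empty bins do not produce log(0) or division by zero.
        return [max(c / len(values), eps) for c in counts]

    ref_p = proportions(reference)
    cur_p = proportions(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref_p, cur_p))
```

A PSI of zero means the binned distributions match. A frequently quoted rule of thumb treats values above roughly 0.25 as a substantial shift, but alert thresholds are better set per feature and tied to the retraining criteria described above.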

Conclusion

Model and code validation for ko44.e3op, tif885fan2.5, chogis930.5z, and 382v3zethuke establishes disciplined, reproducible governance, traceable pipelines, and proactive drift monitoring, so that models reflect real systems and remain robust across scenarios. Organizations that use automated regression checks have reported up to 40% fewer post-deployment issues. Meticulous scope definition, versioned configurations, and transparent reporting are essential to sustain reliability, enable independent verification, and manage risk in complex modelling ecosystems.